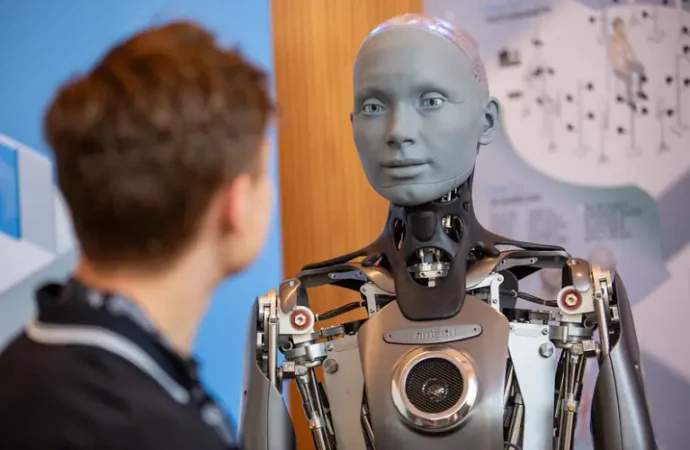

Several weeks ago, Melania Trump garnered attention for proposing an innovative educational methodology: robot education. At the “Fostering the Future Together Global Coalition Summit,” she was escorted by a humanoid, “American-made” robot as she presented the potential luster of AI’s future: humanoid robots could provide a “personalized” education, reminiscent of the tutoring models of centuries gone by.

Melania asked her audience to envision a robot called Plato through whom “access to the classical studies is now instantaneous.” She continued: “Humanity’s entire corpus of information is available in the comfort of your home. Plato will provide a personalized experience, adaptive to the needs of each student. Plato is always patient, and always available.”

Forget about the fact that an “always available” robot sounds somewhat ominous. Instead, Melania appealed to the virtues lauded by the corporate world, “analytic skills and problem solving,” as well as “deep critical thinking and independent reasoning abilities.” Children who learn from such robots, she maintained, could have a “more well-rounded lifestyle,” including more time for play and extracurriculars.

Can Robots Really Teach?

Melania’s proposition sounds similar to programs already in operation, such as homeschooling platforms that utilize AI or the in-person Alpha Schools, where students, under the direction of human guides, spend about two hours daily on schoolwork, thanks to personalized AI tutors. Where her proposition differs is in the use of a three-dimensional humanoid robot, not merely a web-based persona, as tutor. Regardless, Melania’s “Plato” and existing AI education models both spring from a fundamental misunderstanding of human nature and education.

Any education model that tasks AI with teaching or training human beings undermines the fundamentally relational nature of humans. This is merely one aspect of its danger, but a significant one. Human beings require physical interaction and relationship with other humans in order to flourish, whether in spiritual, emotional, or intellectual areas of life.

Without a certain amount of relational input, whether from classmates in a traditional classroom, a homeschooling mother, or a private (human!) tutor, learning becomes an isolating and Sisyphean endeavor. This is one reason why programs of individual “self-education” in the style of “Good Will Hunting” require a rare and precocious personality to be effective.

Nowhere is the need for relational input in education more evident than in research on learning during COVID. When lockdowns and mandates pushed institutions toward online and asynchronous classes, student outcomes suffered. Students simply did not learn as much when their education was mediated by screens, video calls, and online discussion boards as when education was mediated by physical interaction. While students have returned to physical classrooms since COVID, student outcomes continue to suffer as “heightened absenteeism” and “educational technology” gain steam. While computer-based learning and educational technology can be utilized for good in some circumstances, as in specialized degree programs, they are not wise pedagogical models when applied broadly.

Education Should Be Communal

Our need for human interaction is not a “bug” that can be fixed by increased exposure to or refinement of computer-based learning or humanoid systems. It is part of our nature: from the Garden of Eden onward, it is clear that “it is not good for man to be alone” – that we are designed for fellowship with each other and God.

We are, after all, not computers ourselves, however much our minds and bodies follow discernible patterns. We are living, breathing, earthly, fleshy creatures. “It is easy for me to imagine,” Wendell Berry famously wrote, “that the next great division of the world will be between people who wish to live as creatures and people who wish to live as machines.”

AI education requires a mechanistic view of man. It outsources human tasks of teaching, guiding, conversing, and correcting to a non-human entity, thus treating the student as a machine for knowledge accumulation rather than a creature needing formation and direction.

In this way, the faulty pedagogical theory behind AI education is also evident. AI education assumes that the end of education is the mere acquisition of knowledge, which, in this framework, is “an inert substance that can be delivered by robots,” as Mary Harrington recently wrote. If the primary aim of education is for students to learn facts, then robots could be useful. They could even, in some cases, make for good teachers, better at least than the forgetful grandfather who worked as a professor for 40 years or the eager but unpracticed recent graduate new to the elementary school.

But knowledge is not an inert substance composed of discrete facts, and education aims at more even than knowledge.

Robots Can’t Develop the Whole Person

If the aim of education is wisdom and the shaping of a person, then people will be needed, body and soul. If the aim of education is, as John Milton wrote, to “repair the ruins of our first parents,” or, as Hugh of St. Victor wrote, to “restore within us the divine likeness,” then students will need not only people, but people whose souls seek the true, good, and beautiful and their source in God alone.

“Plato,” however patient he may be, will be no match for a flesh-and-blood human teacher, with all his faults, who loves his students and directs them toward divine wisdom.

—

This article was made possible by The Fred & Rheta Skelton Center for Cultural Renewal.

Image credit: Flickr-UN Geneva, CC BY-NC-ND 2.0
