“All men by nature desire to know,” says Aristotle at the beginning of his Metaphysics. This is a natural, healthy, even virtuous, longing in the human heart. But it can also get us into trouble when that desire is corrupted. ChatGPT and similar AI technologies are powerful information tools, but their use does not come without risk of just such a corruption of human knowing.

While the pursuit of truth out of love for it and in order to live a good life brings out our humanity, seeking the wrong kind of knowledge or seeking knowledge in the wrong way ruins the human mind and directs us toward evil. Medieval thinkers called the former desire for knowledge the virtue of studiositas and the latter the vice of curiositas. Curiositas takes different forms: Sometimes we seek knowledge of one element of reality while disregarding the bigger picture; we may pursue unimportant knowledge that doesn’t help us live better lives; or again we may seek knowledge of things we shouldn’t know or try to gain it by forbidden means; worst of all, we may seek knowledge only because of the power it gives us over others.

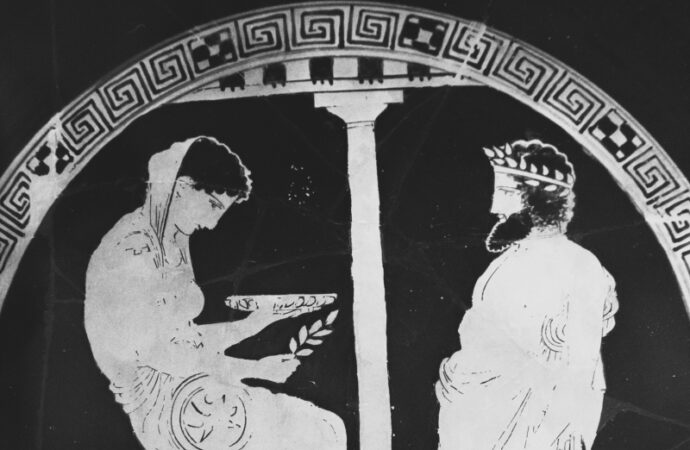

Humanity has a long history of desiring to know things we can’t or shouldn’t know. The development of AI technologies such as ChatGPT is only the latest in a long series of attempts to gain or generate information by unnatural means, a series that traces back at least to the Oracle at Delphi in ancient Greece. In fact, our relationship to AI bears disturbing similarities to the Greeks’ relationship to the Oracle.

The shrine to Apollo at Delphi, called the “navel of the world,” was the most important shrine in the Greek world. Delphi maintained its independence and wasn’t attached to any of the major Greek city-states, such as Sparta or Athens. In theory, it remained neutral and accessible to many people. The Greeks believed that the god Apollo gave messages through his priestess, the Pythia, or Oracle of Delphi. Here, seemingly, was an almost infinite wealth of knowledge and secrets normally hidden to mankind. Visitors could tap into a level of insight far beyond the human.

That temptation—access to knowledge beyond the normal human scope—proved irresistible to many.

But knowledge is perilous. A perusal of the writings of Herodotus or Thucydides reveals numerous occasions on which visitors to the Oracle received ambiguous or even downright misleading answers to their questions, sometimes leading to their death or the destruction of their kingdom. Take King Croesus of Lydia, for example, who asked the Oracle whether he should make war on the Persians. The Oracle responded that if he made war on the Persians, he would destroy a mighty empire. Gleefully, Croesus began his invasion, only to realize later that the empire he had destroyed was his own. On another occasion, the Oracle seemed to provide contradictory predictions about the outcome of the Battle of Salamis, but incidents like these didn’t seem to shake the Greeks’ total, blind confidence in the Oracle.

Sometimes, in seeking to avoid the fate predicted by the Oracle, people fulfilled it. A famous literary example occurs in Oedipus Rex, when Oedipus asks the Oracle about his parentage, only to hear the dire prediction that he will kill his father and marry his mother. Oedipus leaves his home in order to avoid such a fate, but it is this very decision that leads him to unwittingly encounter his biological parents and fulfill the prophecy.

Of course, this story is fiction, and even some of the purportedly historical accounts from Herodotus or Thucydides may be doubtful. But the Greek tales about the Oracle (whether legendary or not) and the reality of the Greeks’ complex relationship with prophecy continually reinforce a fundamental truth: Too much knowledge, especially knowledge obtained in the wrong way, outside of normal human perception and cognition, can betray us.

This brings us back to AI. We can ask ChatGPT any question and receive a detailed, convincing answer. Yet the responses of this modern oracle, like those of its ancient predecessor, can’t be trusted. Sometimes ChatGPT is just flat-out incorrect, occasionally to a potentially dangerous degree, as in the case of medical advice. Further, a recent report indicates that ChatGPT possesses a left-wing bias: The program is more tolerant of hateful comments directed at conservatives or Republicans than of those directed at liberals or Democrats, for example.

On the practical level, this means that those who turn to AI for their information needs may be easily deceived. Students who use the program as a quick and easy way to “write” papers, or companies that use AI to generate online content, may find that their plans backfire. “Knowledge” is indeed perilous.

But the problem is bigger than mere incorrect answers. True, in its ability to collect, process, and generate information, AI offers us distinct advantages and powers unknown to our ancestors. It can solve problems for us and answer critical questions in milliseconds. But it’s all just too easy, too convenient. The piper must be paid, sooner or later. We all know that when someone offers us a magic pill that will make all our troubles go away, there must be a catch somewhere. Drugs promise us artificial, chemically induced happiness, but we know the reality is not happiness but greater misery. The quick fix is never a true fix.

It’s possible that in our ever-increasing efforts to achieve efficiency, comfort, and speed through technology, we risk losing something irretrievable along the way. Have we not dared too much in going beyond mere physical comfort and trying to streamline human thought itself, along with human conversation and language, aspects of life so intimately tied to our rationality and thus to our nature? If we outsource our thinking, we are outsourcing our humanity.

And sometimes I wonder: Are we really so different from the superstitious Greeks of old? Or does our superstition just take a different form? Modern people speak of the capabilities of AI with a kind of hushed awe. We smile at the “ignorant” Greeks bowing down in reverence to gods of their own making, but we are in danger of doing the same with our machine creations. Modern society’s infatuation with, reverence for, and total confidence in “science” and technological advancement, manifested in many people’s attitudes toward AI, isn’t so far from the Greeks’ worship of Apollo and their trust in the treacherous messages of his Oracle.

—

Image credit: Wikimedia Commons-Zde, CC BY-SA 4.0
